Android App created by Processing

with sound

Processing allows you to compile a Processing program into an Android app for phones or tablets.

This program demonstrates playing multiple sound files.

It works with Processing 3.3.7 as of May 27, 2018.

Written with help from 'kfrajer' on the discourse.processing.org/c/processing-android forum.

Here's a quick demo video of a Processing Game that has been ported to Android.

Here's how to install Android mode in Processing. Here's a quick getting started demo sketch.

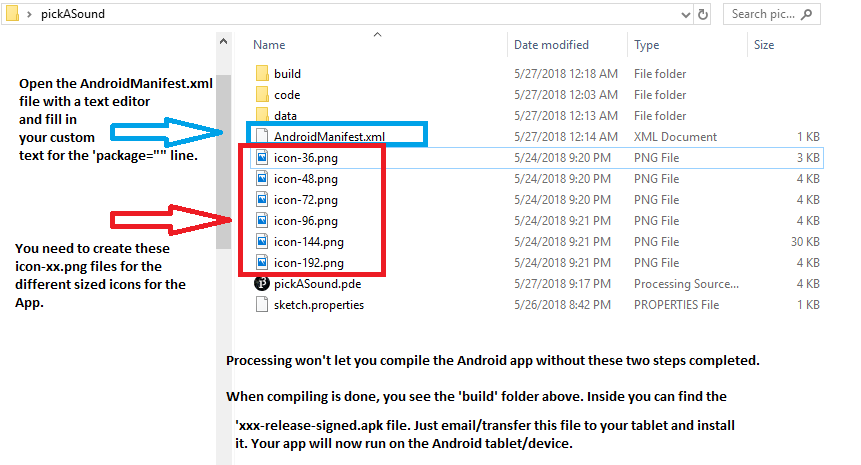

You need to create a set of custom icons for your app. The Distribution page tells you to create files called 'icon-36.png', 'icon-48.png', 'icon-72.png', 'icon-96.png', 'icon-144.png' and 'icon-192.png'.

These are square icons at the corresponding sizes: 36x36 pixels, 48x48 pixels, 72x72 pixels and so on.

I just opened one of my game picture files in a graphics editor that can save .png files (Paint, Photoshop etc.), resized the canvas and graphic to each of the sizes above, and saved each copy under the specified name. See the picture below:

The icon files go in your SKETCH folder at the root level.

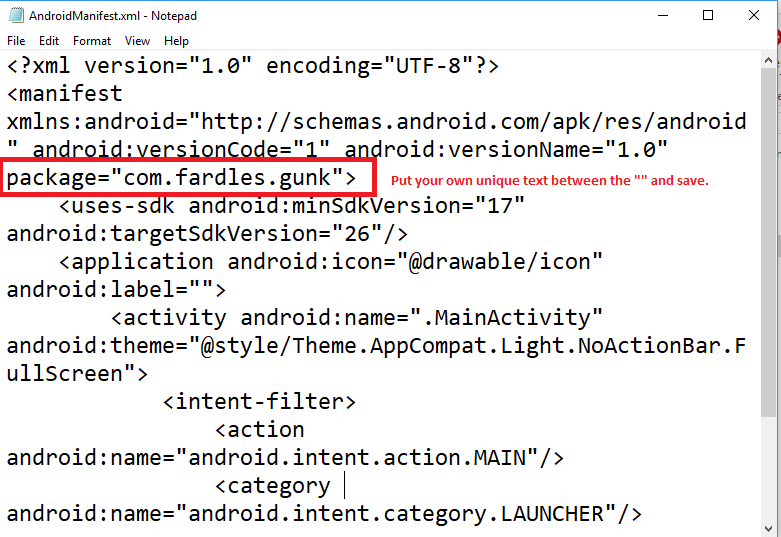
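If you'd rather not resize by hand, the same job can be scripted. Here's a minimal sketch in plain Java (run on your computer, not inside the Android sketch), assuming a roughly square source picture. The class name `IconMaker` and its helper names are made up for illustration; only the six file names come from the Distribution page.

```java
import java.awt.Graphics2D;
import java.awt.RenderingHints;
import java.awt.image.BufferedImage;
import java.io.File;
import java.io.IOException;
import javax.imageio.ImageIO;

// Hypothetical helper: scales one picture to the six icon sizes.
public class IconMaker {
    // Icon edge lengths in pixels, matching the required file names.
    static final int[] SIZES = {36, 48, 72, 96, 144, 192};

    // Builds the file name for a given size, e.g. "icon-36.png".
    static String iconName(int size) {
        return "icon-" + size + ".png";
    }

    // Returns a size x size copy of src (a non-square source gets stretched).
    static BufferedImage resize(BufferedImage src, int size) {
        BufferedImage out = new BufferedImage(size, size, BufferedImage.TYPE_INT_ARGB);
        Graphics2D g = out.createGraphics();
        g.setRenderingHint(RenderingHints.KEY_INTERPOLATION,
                           RenderingHints.VALUE_INTERPOLATION_BICUBIC);
        g.drawImage(src, 0, 0, size, size, null);
        g.dispose();
        return out;
    }

    // Usage: java IconMaker <source picture> <sketch folder>
    public static void main(String[] args) throws IOException {
        if (args.length < 2) {
            System.err.println("usage: java IconMaker <source picture> <sketch folder>");
            return;
        }
        BufferedImage src = ImageIO.read(new File(args[0]));
        if (src == null) {
            throw new IOException("not a readable image: " + args[0]);
        }
        File sketchFolder = new File(args[1]);
        for (int size : SIZES) {
            ImageIO.write(resize(src, size), "png", new File(sketchFolder, iconName(size)));
        }
    }
}
```

Point the second argument at the root of your sketch folder so the icons land where Processing expects them.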

The instructions also tell you to update the 'AndroidManifest.xml' file to include a website.

No website is needed. Just open the .xml file in a text editor, put some text (e.g. 'myapp') between the double quotes in the package="" line, and save the file.

Here's how to create the App for your device (Distribution)

Clarifications to the Processing Android instructions for 'Distribution':

The website makes it sound like you must upload your project to the Google Play Store before you can install it.

It's not necessary. The 'Export Signed Package' process creates some *.apk build files. Copy the '...release_signed.apk' file to your phone or tablet and install it, and your app will run.

Here's a project that plays four .mp3 files at the press of a button. The sounds can also play over each other: each one gets its own Android MediaPlayer object, so simultaneous playback is taken care of for you. Pretty slick!

Just substitute your own .mp3, .wav etc. files in the 'openFd' lines. Make sure the sound files are in the sketch's 'data' folder.

// play several sounds simultaneously

// works with Processing 3.3.7 as of May 27 2018

// PHEW!

// 2018 - Gord Payne - with help from 'kfrajer' on the discourse.processing.org/c/processing-android forum

import android.media.MediaPlayer;

import android.content.res.AssetFileDescriptor;

import android.content.Context;

import android.app.Activity;

MediaPlayer s1, s2, s3, s4; // for sound MediaPlayer objects

// these two objects determine the file access path to the sound files

Context context;

Activity act;

// 4 file path descriptors

AssetFileDescriptor af1, af2, af3, af4;

void setup() {

act = this.getActivity();

context = act.getApplicationContext();

try {

s1 = new MediaPlayer();

s2 = new MediaPlayer();

s3 = new MediaPlayer();

s4 = new MediaPlayer();

// all your .mp3 or other sound files are in the 'data' folder in your project folder

af1 = context.getAssets().openFd("fogleg09.mp3");

af2 = context.getAssets().openFd("bd04.mp3");

af3 = context.getAssets().openFd("bugs11.mp3");

af4 = context.getAssets().openFd("daffy10.mp3");

// the offset and length tell us where the file starts and how long it is.

// if these parameters are not specified, all sounds in the data folder will be played in sequence

// even if you only want one

s1.setDataSource(af1.getFileDescriptor(), af1.getStartOffset(), af1.getLength());

s2.setDataSource(af2.getFileDescriptor(), af2.getStartOffset(), af2.getLength());

s3.setDataSource(af3.getFileDescriptor(), af3.getStartOffset(), af3.getLength());

s4.setDataSource(af4.getFileDescriptor(), af4.getStartOffset(), af4.getLength());

s1.prepare();// prepare the sound for playing/usage

s2.prepare();

s3.prepare();

s4.prepare();

}

catch(IOException e) {

// Left empty on purpose. Print the exception here (e.g. e.printStackTrace()) if you want to see a 'file not found' error; that is only useful while testing the app on a device connected over USB.

}

// draw some buttons to mousePress on screen

fill(200, 0, 0); // red

rect(100, 100, 50, 50);

fill(0, 200, 0);// green

rect(100, 200, 50, 50);

fill(0, 0, 200);// blue

rect(200, 100, 50, 50);

fill(200, 0, 200);// magenta

rect(200, 200, 50, 50);

}

void draw() {

}

void mousePressed() {

// check which screen zone you picked and play that sound

if ((mouseX>100)&&(mouseX<150)&&(mouseY>100)&&(mouseY<150)) {

s1.start();

}

if ((mouseX>100)&&(mouseX<150)&&(mouseY>200)&&(mouseY<250)) {

s2.start();

}

if ((mouseX>200)&&(mouseX<250)&&(mouseY>100)&&(mouseY<150)) {

s3.start();

}

if ((mouseX>200)&&(mouseX<250)&&(mouseY>200)&&(mouseY<250)) {

s4.start();

}

}

// housekeeping

public void pause() {

super.pause();

if (s1 !=null) {

s1.release();

s1 = null;

}

if (s2 !=null) {

s2.release();

s2 = null;

}

if (s3 !=null) {

s3.release();

s3 = null;

}

if (s4 !=null) {

s4.release();

s4 = null;

}

}

public void stop() {

super.stop();

if (s1 !=null) {

s1.release();

s1 = null;

}

if (s2 !=null) {

s2.release();

s2 = null;

}

if (s3 !=null) {

s3.release();

s3 = null;

}

if (s4 !=null) {

s4.release();

s4 = null;

}

}
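The four if statements in mousePressed() all do the same rectangle check. As a rough sketch of the idea in plain Java (no Android needed), the check can be pulled into one helper that reports which button was hit. `ButtonGrid`, `CORNERS` and `hitIndex` are hypothetical names, not part of Processing; the coordinates are the ones from the rect() calls in setup().

```java
// Hypothetical helper: maps a press position to a button index.
public class ButtonGrid {
    // Edge length of each square button, as in rect(x, y, 50, 50).
    static final int SIZE = 50;

    // Top-left corners of the four buttons, in the same order as s1..s4.
    static final int[][] CORNERS = {
        {100, 100}, // red     -> s1
        {100, 200}, // green   -> s2
        {200, 100}, // blue    -> s3
        {200, 200}  // magenta -> s4
    };

    // Returns 0..3 for the button containing (x, y), or -1 if none does.
    // The comparisons are strict, like the original if statements.
    static int hitIndex(int x, int y) {
        for (int i = 0; i < CORNERS.length; i++) {
            int left = CORNERS[i][0];
            int top = CORNERS[i][1];
            if (x > left && x < left + SIZE && y > top && y < top + SIZE) {
                return i;
            }
        }
        return -1;
    }

    public static void main(String[] args) {
        System.out.println(hitIndex(125, 225)); // inside the green button
        System.out.println(hitIndex(10, 10));   // outside every button
    }
}
```

In the sketch you could then keep the four players in an array and call start() on the one at the returned index, skipping the call when the result is -1.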
